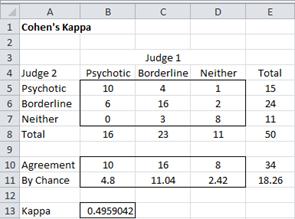

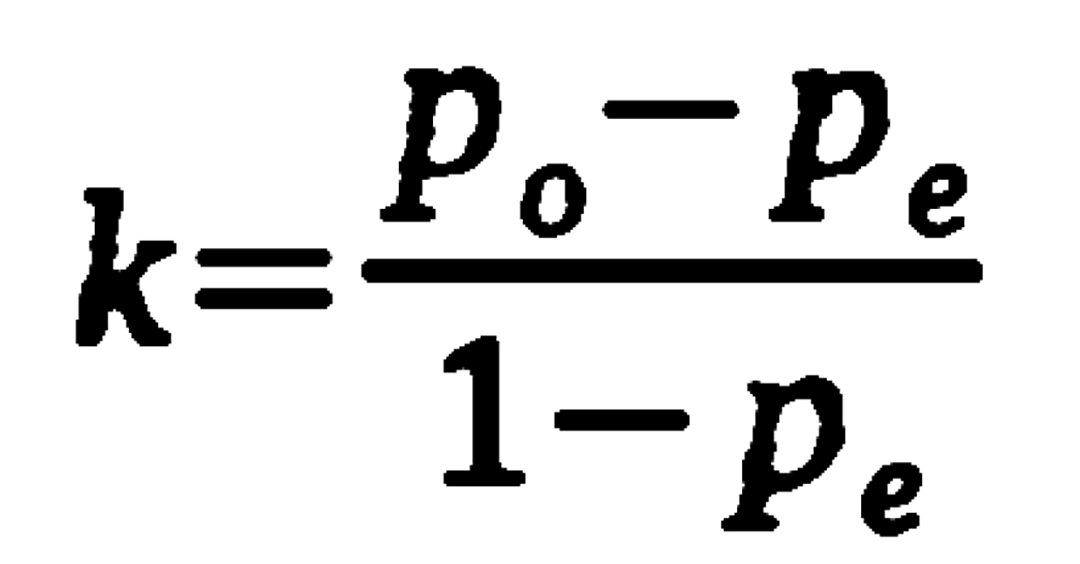

Understanding the calculation of the kappa statistic: A measure of inter-observer reliability Mishra SS, Nitika - Int J Acad Med

Agree or Disagree? A Demonstration of An Alternative Statistic to Cohen's Kappa for Measuring the Extent and Reliability of Ag

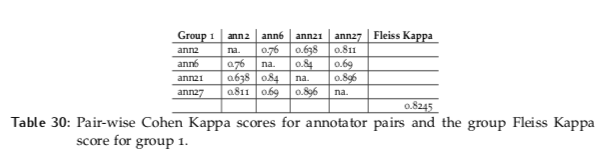

Cohen's Kappa and Fleiss' Kappa— How to Measure the Agreement Between Raters | by Audhi Aprilliant | Medium

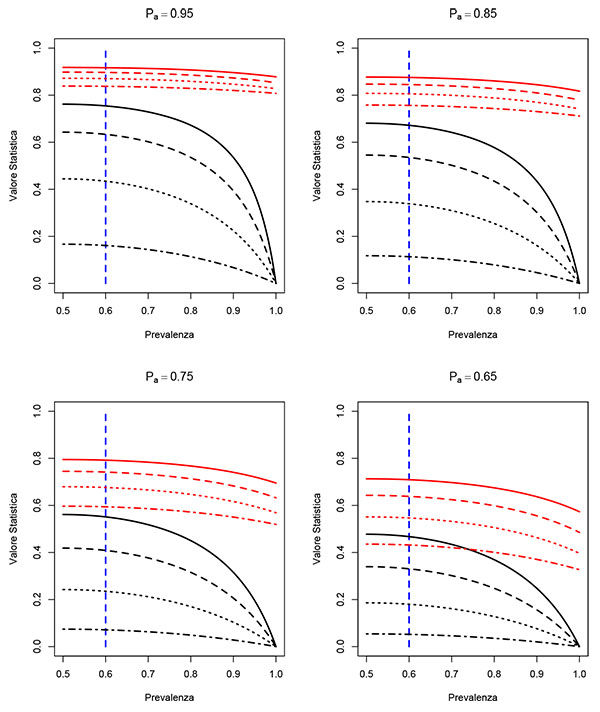

![Cohen's quadratically weighted kappa is higher than linearly weighted kappa for tridiagonal agreement tables | Semantic Scholar](https://d3i71xaburhd42.cloudfront.net/5df092de279231383db41edabbc6e93624b302b6/2-Table1-1.png)

[PDF] Cohen's quadratically weighted kappa is higher than linearly weighted kappa for tridiagonal agreement tables | Semantic Scholar