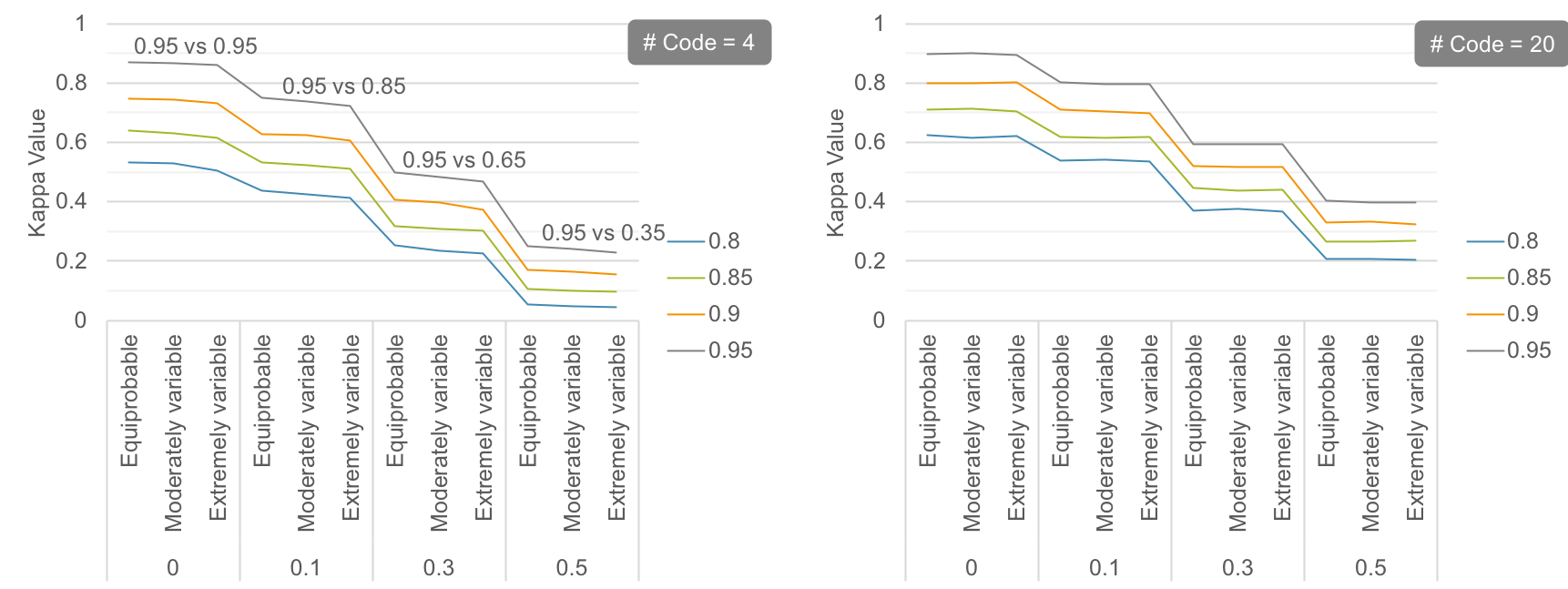

Interpretation of Kappa Values. The kappa statistic is frequently used… | by Yingting Sherry Chen | Towards Data Science
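The linked article is about interpreting kappa values. Here is a minimal sketch that does not come from that article. For two raters, Cohen's kappa is κ = (p_o − p_e)/(1 − p_e), where p_o is the observed agreement and p_e is the agreement expected by chance from each rater's label frequencies. The Python below assumes two equal-length lists of nominal labels. `cohens_kappa` and `landis_koch_label` are hypothetical helper names. The verbal bands follow the widely cited Landis and Koch (1977) scale, which may not be the scale the article recommends.

```python
from collections import Counter
from typing import Hashable, Sequence


def cohens_kappa(rater_a: Sequence[Hashable], rater_b: Sequence[Hashable]) -> float:
    """Cohen's kappa for two raters labelling the same items (hypothetical helper)."""
    if len(rater_a) != len(rater_b) or not rater_a:
        raise ValueError("raters must label the same, non-empty set of items")
    n = len(rater_a)
    # Observed agreement: share of items where both raters chose the same label.
    p_o = sum(a == b for a, b in zip(rater_a, rater_b)) / n
    # Chance agreement: for each label, product of the two raters' marginal proportions.
    counts_a, counts_b = Counter(rater_a), Counter(rater_b)
    p_e = sum(counts_a[label] * counts_b[label] for label in counts_a) / (n * n)
    if p_e == 1:
        raise ValueError("kappa is undefined when chance agreement equals 1")
    return (p_o - p_e) / (1 - p_e)


def landis_koch_label(kappa: float) -> str:
    """Verbal label from the Landis and Koch (1977) bands (one common convention)."""
    if kappa < 0:
        return "poor"
    for upper, label in [(0.20, "slight"), (0.40, "fair"),
                         (0.60, "moderate"), (0.80, "substantial")]:
        if kappa <= upper:
            return label
    return "almost perfect"


if __name__ == "__main__":
    a = ["yes", "yes", "no", "no", "yes", "no", "yes", "yes", "no", "yes"]
    b = ["yes", "no", "no", "no", "yes", "no", "yes", "yes", "yes", "yes"]
    k = cohens_kappa(a, b)
    print(f"kappa = {k:.3f} ({landis_koch_label(k)})")
```

On the sample data, the raters agree on 8 of 10 items (p_o = 0.8). Each rater says "yes" 6 times, so p_e = 0.6 × 0.6 + 0.4 × 0.4 = 0.52. That gives κ = 0.28/0.48 ≈ 0.583, which falls in the "moderate" band.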

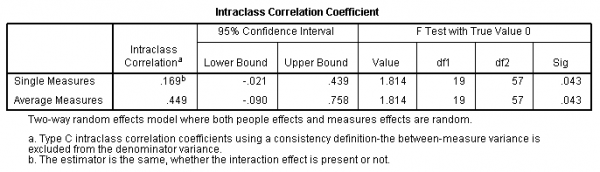

Interrater reliability and interrater agreement (ICCs) and Cronbach's...

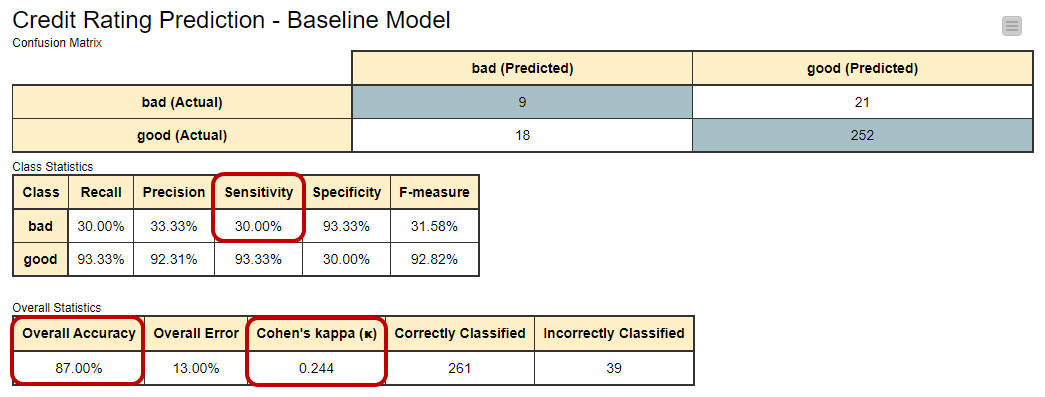

Development, test-retest reliability and validity of the Pharmacy Value-Added Services Questionnaire (PVASQ)

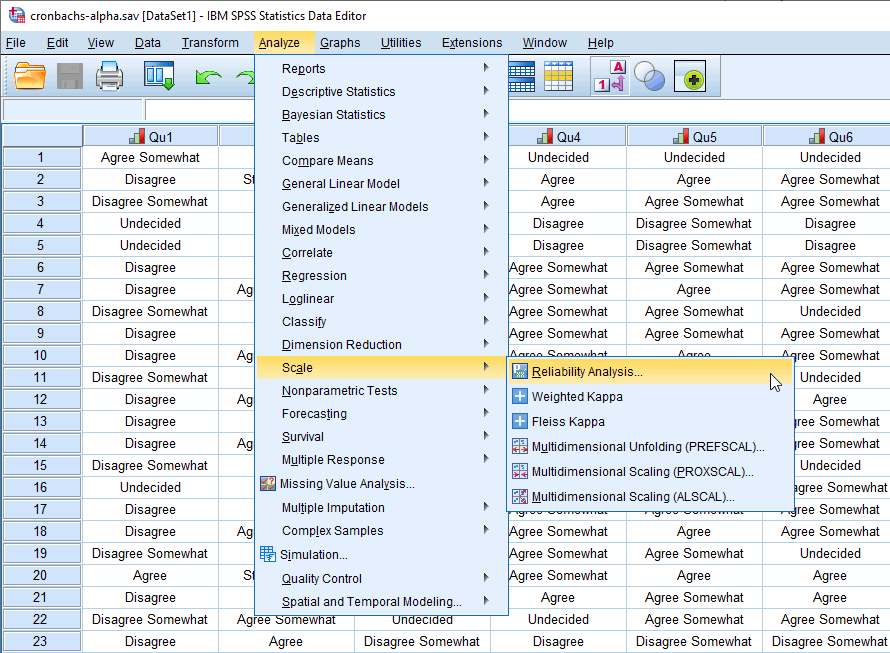

Cronbach's Alpha in SPSS Statistics - procedure, output and interpretation of the output using a relevant example | Laerd Statistics.
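The Laerd guide computes Cronbach's alpha in SPSS. The same statistic, α = k/(k − 1) × (1 − Σ s_i² / s_T²), can be sketched in plain Python. Here k is the number of items, s_i² is the variance of item i, and s_T² is the variance of respondents' total scores. This sketch assumes a rectangular respondents × items matrix with no missing answers, and it uses sample variances throughout. `cronbach_alpha` is a hypothetical helper, not an SPSS or Laerd API.

```python
from statistics import variance
from typing import Sequence


def cronbach_alpha(scores: Sequence[Sequence[float]]) -> float:
    """Cronbach's alpha for a respondents x items score matrix (hypothetical helper).

    alpha = k / (k - 1) * (1 - sum(item variances) / variance(total scores))
    """
    if len(scores) < 2:
        raise ValueError("need at least two respondents")
    k = len(scores[0])
    if k < 2:
        raise ValueError("need at least two items")
    if any(len(row) != k for row in scores):
        raise ValueError("every respondent must answer every item")
    # Sample variance of each item (column) across respondents.
    item_vars = [variance([row[j] for row in scores]) for j in range(k)]
    # Sample variance of each respondent's total (summed) score.
    total_var = variance([sum(row) for row in scores])
    return k / (k - 1) * (1 - sum(item_vars) / total_var)


if __name__ == "__main__":
    # Five respondents answering three Likert-type items (illustrative data).
    data = [
        [3, 4, 3],
        [4, 5, 4],
        [2, 3, 3],
        [5, 5, 4],
        [3, 3, 2],
    ]
    print(f"alpha = {cronbach_alpha(data):.3f}")
```

For this illustrative data, the item variances are 1.3, 1.0 and 0.7, which sum to 3.0. The total-score variance is 7.8. So α = 1.5 × (1 − 3.0/7.8) ≈ 0.923. Using the same variance estimator for items and totals matters more than which one you pick, because the choice cancels in the ratio.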
